For one reason or another, there is quite a bit of confusion surrounding the technologies that allow file sharing to take place on a Windows machine. The h […] Source: Windows File Sharing: Facing the Mystery. Phenomenal article that clarifies Windows file sharing and sheds very clear light on which ports are needed based on what […]

OpenVPN Access Server uses NAT (Network Address Translation) to “ease” routing VPN user traffic to the rest of a remote network. This isn’t always a desirable configuration. If you want to disable NAT globally, you can do so by logging in to the shell as the root user on your OpenVPN Access Server and doing […]

Sometimes you need to mirror files between a couple of different servers in your Windows environment. There are a couple of command-line tools that can be used together to make this a very easy job. To further simplify things, especially if you need to mirror the same files/folders on a recurring basis, you can drop […]

I am just archiving this link for myself (and anyone else that needs this information) as well as the pertinent information therein. Basically if you run multiple OpenVPN servers in your environment you probably need your OpenVPN Connect Client to be able to handle multiple profiles. This isn’t enabled out of the box for the […]

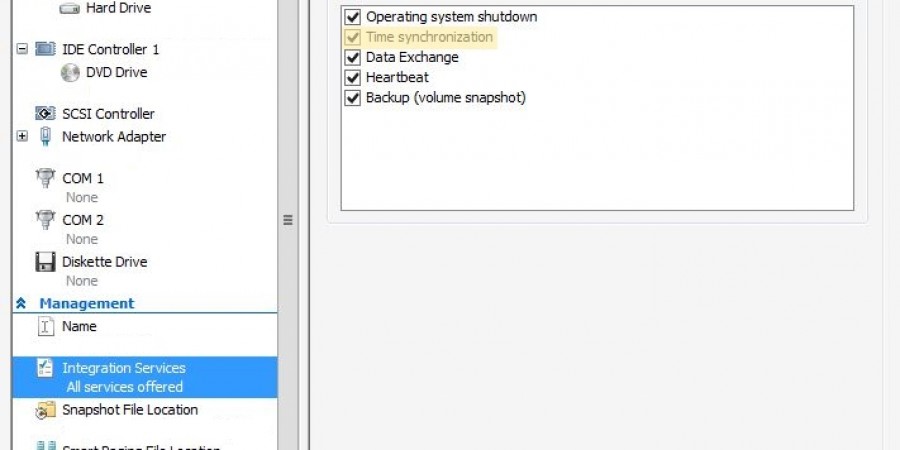

Ran into a fun issue today… I had a pair of Server 2012 R2 servers in a remote office that refused to sync the proper time for their clocks. No matter what I did they were always off by five minutes. One of them was a domain controller for the office. In the process of […]

Heartbleed was a major vulnerability in OpenSSL, the SSL/TLS library used by many sites and services. Folks have been scrambling to patch it since it was announced a few days ago. If you are in the process of doing just that for yourself or your organization, you might be so busy fixing websites […]

Most UTM (unified threat management) Firewall devices worth their price tag include a VPN server as part of the mix. In my experience, a UTM is an excellent choice for a small office and/or most smaller enterprises as several of the higher-end devices scale quite far. For a larger, corporate network though, while a UTM […]

I have been working on something well outside of my experience range recently (typical…), namely Cisco switch administration. In particular, I was working on a Cisco Catalyst 3560 switch, which apparently doesn’t have as robust or user-friendly a web GUI as I would have liked. A couple of years back I set up a SPAN port (aka mirror port) on […]

Google Authenticator, and (all?) other rotating-PIN multi-factor authentication systems, rely on the clock on the token device (in this case your smartphone or tablet) agreeing with the clock on the authenticating system (in this case the OpenVPN server). If the clocks drift apart by more than the server tolerates, which can be a matter of seconds, it will break your authentication.
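To see why the clocks matter, here is a minimal sketch of how a time-based one-time code can be derived, following the TOTP scheme from RFC 6238. The function name `totp`, the 30-second step, and the SHA-1 hash are assumptions chosen for illustration; this is not taken from the OpenVPN server's own code.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: float, step: int = 30, digits: int = 6) -> str:
    """Derive a one-time code from a shared secret and a clock reading.

    Illustrative sketch of RFC 6238 (TOTP); step and digits are assumed defaults.
    """
    # Both sides turn "now" into a counter: the number of whole steps since the epoch.
    counter = int(unix_time) // step
    # HMAC the counter (as an 8-byte big-endian integer) with the shared secret.
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte window.
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    # Keep only the requested number of decimal digits, zero-padded.
    return str(value % 10 ** digits).zfill(digits)


if __name__ == "__main__":
    import time

    shared_secret = b"12345678901234567890"  # hypothetical secret for the demo
    now = time.time()
    print("device code:", totp(shared_secret, now))
    print("server code if its clock is 60s behind:", totp(shared_secret, now - 60))
```

The code depends only on the secret and on which time step each side believes it is in, so two clocks that land in different steps produce different codes. Servers often accept a neighbouring step or so to absorb small drift, but anything beyond that fails.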

Thought I would post this one quickly… Having trouble getting OpenVPN to start/work for you and you are seeing this error in your logs? “TCP/UDP: Socket bind failed on local address” The resolution is pretty simple. Try changing the port you have assigned to OpenVPN in your config file and restarting the service. Most likely […]
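Before picking a new port, it can help to check whether a candidate port can actually be bound on the machine. The following is a minimal sketch using only Python's standard library; the helper name `can_bind` and the example port numbers are hypothetical and not part of OpenVPN.

```python
import socket


def can_bind(port: int, host: str = "0.0.0.0", udp: bool = True) -> bool:
    """Return True if a socket can currently be bound to host:port.

    Hypothetical helper for illustration; it only tests the bind and then
    releases the port again.
    """
    kind = socket.SOCK_DGRAM if udp else socket.SOCK_STREAM
    with socket.socket(socket.AF_INET, kind) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            # Typically "address already in use" or a permissions problem.
            return False
    return True


if __name__ == "__main__":
    # 1194 is the conventional OpenVPN port; 1195 is just an example alternative.
    for candidate in (1194, 1195):
        state = "free" if can_bind(candidate) else "not available"
        print(f"UDP port {candidate}: {state}")
```

A port that reports as not available here is a reasonable hint that something else already holds it, which lines up with the bind error in the log.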

I am not sure when OpenVPN added multi-factor support to their Access Server but I am thrilled that they did. It must have been recently (within the last few weeks or months) as I was using OpenVPN Access Server about 4 months ago as a temporary solution while my main solution was down and it […]

In a previous post I dealt with setting up an OpenVPN Community Edition server which is the free version of OpenVPN. I had initially hoped to use Authy for two-factor authentication in addition to LDAP but later found out that wasn’t going to work. So now I am looking at using DUO for two-factor authentication […]

After having already gotten a full page into writing a walkthrough (not to mention hours already spent with Authy), I found out that Authy will NOT WORK with OpenVPN and LDAP authentication unless the folks at Authy customize the LDAP module for you. That requires enterprise support, at a retail price of $500/month! Which was […]

INTRODUCTION: I wrestled with getting OpenVPN to work with Microsoft Active Directory authentication for the better part of 2 days. I was surprised that it was so hard to find a straightforward tutorial on the topic that actually worked! I had to do a lot of Google-Fu and look at many different pages to put together what […]
